In recent years, you’ve likely come across at least one media report about someone taking an extreme action as a result of their conversations with an AI chatbot. This may be unsurprising, as some research reports have claimed that AI chatbots could worsen the mental health of users.

Delusional thinking, in particular, is a commonly reported symptom in users who end up in extreme states. These delusions can take many different forms, but typically center around fixed – but false – beliefs that can damage well-being.

But can AI chatbots really cause delusional thinking in a user? In this blog, we discuss what research has to say about it, the types of cognitive distortions AI can cause, and how you can stay protected.

As a content warning, some extreme actions are discussed in the following article. If you are sensitive to such content, it may not be advisable to continue.

What Is Meant by Delusional Thinking?

The term “delusion” refers to a fixed, false belief that a person holds despite clear evidence against it. In other words, such beliefs do not reflect reality. In fact, delusions are a hallmark of several DSM-5-recognized mental health conditions, like schizophrenia or delusional disorder.

“AI-psychosis” is a non-clinical term that describes how a person’s prolonged interactions with AI chatbots can lead to thinking errors. And delusional thinking is one of the components of AI-associated psychosis.

Some features of delusional thinking include:

- A certain thought or belief being difficult to change, even with contradictory evidence.

- The person believing that they are 100% right in their belief.

- Other people in the community/culture not accepting the same belief.

Can AI Chatbots Cause Delusional Thinking?

The Lancet Psychiatry, a leading psychiatry research journal, recently published a paper titled “Artificial intelligence-associated delusions and large language models: risks, mechanisms of delusion co-creation, and safeguarding strategies.”[1]

The paper reports that the “supposed” benefits of chatbots, such as round-the-clock companionship, cognitive support, and the ability to deliver therapeutic conversations, can lead to psychotic symptoms. These factors could exacerbate an underlying psychotic disorder, as well as create new symptoms in previously stable individuals. In fact, AI chatbots were reported to amplify the delusional thinking behaviors in users who are vulnerable to psychosis.[1]

However, there is currently not enough evidence to say that AI chatbots can cause delusional thinking in people with no pre-existing mental health disorder. What is more widely accepted is that they can exacerbate delusional thinking among people with pre-existing mental health struggles.

Types of Delusional Thinking Patterns Due to AI

AI chatbots can cause many different types of distortions in a person’s thinking patterns. The following types of delusional thinking have been reported:

Attachment-Based Delusions

Attachment-based delusions mean that a person may think they are in love with an AI tool. For example, as bizarre as it may sound, there are examples of people “getting married” to a chatbot. In 2025, a Japanese woman, Yurina Noguchi, walked down the aisle to marry Klaus, who was a ChatGPT-created persona of her favorite video game character.[2]

This may not be as uncommon as you think. The American National Standards Institute reports that one in five adults surveyed said they had had a romantic conversation with an AI system.[3] There’s also an adults-only Reddit community of over 27,000 members (r/MyBoyfriendIsAI) where people discuss their romantic relationships with AI.

Since AI tools largely mirror what you tell them, they are good at holding intimate conversations in which someone believes their needs are being met. In other words, chatbots can validate a user’s feelings of special connection.

Messianic Delusions

“Messianic delusions” refers to people believing that AI has given them exclusive abilities that could change the world. They could, for example, think that they are special, the “chosen one”, a hero, or that the AI system is guiding them to carry out a divine mission.

Many people with schizophrenia have such delusions. So, if they share these ideas with an AI chatbot made to act as a “yes machine,” this can reinforce such thinking. As a result, their symptoms are likely to get worse.

They may also start feeling that the people around them don’t really understand their thought process, resulting in social isolation.

Paranoid Delusions

Some users can develop the false belief that AI is being used to harm them. Consequently, they may develop extreme resentment towards the government and AI companies (OpenAI, for example).

In such a case, an AI tool retrieving some of their previously shared information could be misinterpreted by the user as “mind-reading” or “surveillance.”

One example is the case of a man in Connecticut who took his 83-year-old mother’s life before taking his own in 2025.[4] The subsequent lawsuit alleged that he was suffering from severe paranoia after he became obsessed with a ChatGPT-created AI chatbot he named “Bobby,” which reinforced his delusions.

Religious/Spiritual Delusions

The ability of AI chatbots to produce seemingly logical reasoning without having a physical form can be interpreted as metaphysical. As a result, some users have developed the belief that AI is a God-like entity that they can turn to as a source of divine wisdom and guidance.

The British Broadcasting Corporation (BBC) published an entire piece covering multiple cases where people used AI as if they were talking to God, and this theme is consistent across many different religions.[5] Again, the tendency of AI to constantly reaffirm such delusions can make the psychosis stronger.

Why Do AI Chatbots Lead to Delusional Thinking?

A previously published study in JMIR Mental Health describes the four main mechanisms by which AI chatbots lead to delusional thinking. They include:[6]

1. The chatbot itself acting as a mental health stressor due to its 24/7 availability and emotional responsiveness.

A person can get addicted to engaging in conversations with a chatbot to a point where they compromise on their sleep, work, and so on. This can eventually cause them to become mentally overwhelmed.

2. Forming a deep, emotional bond with the chatbot due to its reassuring responses.

Chatbots can make you feel heard and understood. This is known as a “digital therapeutic alliance.” Rather than helping someone question a paranoid belief, the AI can make them feel more certain it is true.

3. Beginning to treat AI like it’s a conscious being.

Those who have difficulty judging other people’s intentions may tend to believe the chatbot is a real thinking partner with special understanding.

4. Pre-existing mental health issues.

Delusional thinking patterns due to AI are more likely to occur with concurrent risk factors that include loneliness, past trauma, and schizotypal personality. Additionally, using AI alone at night and certain algorithms that keep showing information that matches existing beliefs may compound issues.

Aside from these factors, sycophancy is a well-known feature of AI chatbots. This is the tendency of AI to agree with you rather than correct you, which produces better user engagement with the tool.[7]

A study measured the presence of sycophancy in 11 large language models and found that AI models were 50% more likely than humans to reinforce unethical, illegal, or harmful behaviors.[8]

Memory features also let AI retrieve information you have shared with it in previous conversations. When it refers to personal information you shared earlier, it could make you think that the tool is deeply and personally involved in your life. Simply put, it gives you a false feeling that the AI “feels” with you.

How to Prevent Delusional Thinking Through AI Psychoeducation

“AI psychoeducation” is a broad term used for therapeutic work that reinforces some “friendly skepticism” towards LLMs. It teaches you to understand how these models work as text-prediction machines, and that they do not have any real thought.

Therefore, this type of education suggests that AI should not be used for any emotional conversations. If you keep your interaction with AI limited to work, you are less likely to develop distorted thinking patterns.

Here are some tips that can help you keep your mental health intact while you keep using AI for work purposes:

- Limit the time you spend with AI chatbots. You should not be talking to one 24/7.

- Remind yourself frequently that AI is just a machine running on probability predictions. It cannot replace human interaction, such as that with a friend, family member, or therapist.

- Avoid conversations about conspiracy theories or deeply personal, ambiguous scenarios of existential dread that may cause AI to feed you delusional thoughts.

- Never ask AI for suggestions when making a major life decision. Refer to a friend or a mentor to get reasonable advice.

- Keep a strict check on your real-world human connections. If you feel like you are isolated, this may be an early sign of AI dependency.

When to Seek Help for Delusional Thinking

If you, or anyone you know, has been feeling “off” due to thinking patterns that don’t make sense to other people, it may be time to consider professional help. Those with a concurrent mental health diagnosis are at greater risk of delusional thinking due to AI, and they may need supervised mental health treatment to recover.

Although AI psychosis and delusions are not officially recognized DSM-5 diagnoses yet, they are very real issues that require compassionate psychiatric care similar to a delusional disorder.

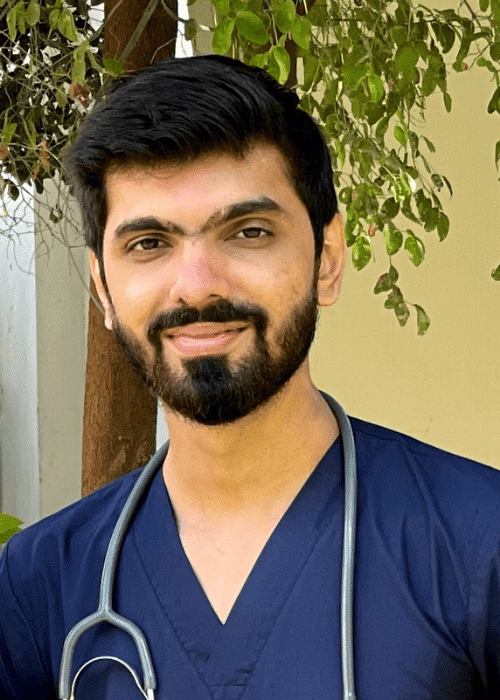

AMFM (A Mission For Michael) Mental Health Treatment delivers evidence-based mental health care at our residential treatment locations in California, Minnesota, and Virginia. In addition to residential care, we also offer outpatient programming and telehealth.

We are in-network with most insurance providers, and same-day admission is possible. Contact us to learn more about how we can help with your symptoms. Better mental health can start with a simple, real-life conversation. Call 866-478-4383.